Time is running out for ethicists to tackle very real robot quandaries

By its nature, the Open Roboethics Initiative is easy to dismiss — until you read anything they’ve published. As we head toward a self-driving future in which virtually all of us will spend some portion of the day with our lives in the hands of a piece of autonomous software, it ought to be clear that robot morality is anything but academic. Should your car kill the child on the street, or the one in your passenger seat? Even if we can master such calculus and make it morally simple, we will do so only in time to watch a flood of household robots enter the market and create a host of much more vexing problems. There’s nothing frivolous about it — robot ethics is the most important philosophical issue of our time.

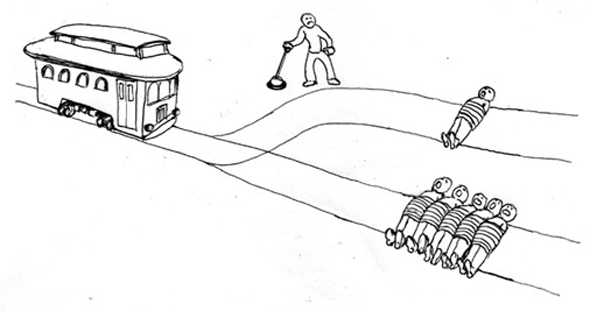

Many readers are probably familiar with the following moral quandary, which is not specifically associated with robotics: A train is headed for, and will definitely kill, five helpless people, and you have access to a lever that will change its track and direct it away from the five, and over another, lone victim instead. A grislier version asks you to decide whether to push a single very large person in front of the train to bring it to a wet, disgusting halt, which makes it impossible to deny culpability for the single death, and that culpability is the crux of the moral problem. Obviously, five dead people is worse than one dead person, but you didn’t set the train moving in the first place, and pulling the lever (or pushing that poor dude) will insert you as a directly responsible actor in whatever outcome arises. This raises the question: If it’s possible for you to pull the lever in time, would your inaction also constitute direct responsibility for the five deaths that result?

In normal, human life this stumper can be set aside quite well with the following argument: “Whatever.” That really isn’t as insensitive as it might seem, since a) The situation will almost certainly never actually arise, and b) We are not inherently responsible for anyone else’s actions. This means that the question of whether to kill the five or the one is ultimately academic, since any single person who actually makes the “wrong” decision in a real-life crisis will do so with zero moral implication for the rest of us. So unless we get really unlucky and happen to actually be that guy who stumbles on a train-lever situation, it’s ultimately someone else’s problem. The impossibility of perfecting human behavior means we have no moral imperative to try to do so.

In the case of robots, however, we have no such easy out. In theory, it is possible to perfect a robot’s actions from a moral perspective, to program an infallibly correct set of if-then instructions that will, to whatever extent possible, minimize pain and evil in the world. If a robot is ever presented with our hypothetical train-switch scenario (or if a self-driving car is presented with choosing one of two horrible possible outcomes of an oncoming crash), then our answers to these otherwise wanky philosophical questions become very important indeed. If we wimp out and refuse to come to a consensus on the tough moral questions for robots, as we mostly have for humans, we may actually be responsible when those robots allow or even assist evil all around us.

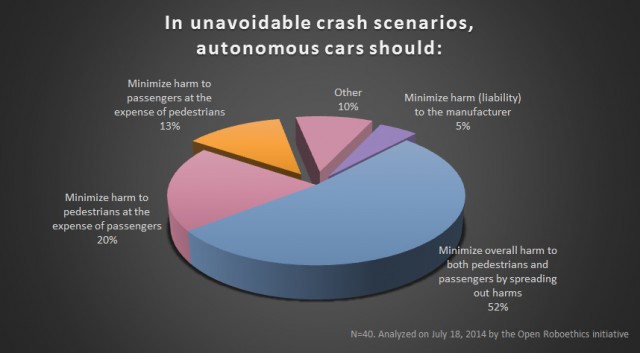

And yet, at their heart even these sorts of life or death considerations ought to be easy to dismiss through simple arithmetic: five is greater than one, and minimizing death is the obvious choice when we can outsource the actual lever-pulling to a robot, thus removing ourselves from culpability by an additional causal step. Open Roboethics conducted a poll on this issue, and the respondents had a spectrum of opinion on such coldly numerical ways of thinking. Right away we have a conflict, and one that could dramatically slow the release (or at least the sales) of self-driving cars. Who’s going to buy a car that would decide to kill you, your spouse, and your three kids to avoid forcing a school bus into a wall? Who’s going to buy a car that wouldn’t? Alternatively, who’s going to support a politician who wants one or the other standard to be enforced by law?

There are other, harder problems coming on quickly, scenarios that are difficult not because of our squeamishness about death but because of our conflicted understanding of the relative importance of different aspects of living. Should a robot hand a bottle with a big skull-n-crossbones on it to a disabled, diagnosed depressive who could never reach it themselves? Should a robot hand a bottle of booze to a disabled, severe alcoholic? Should a robot hand a cheeseburger to a disabled person with high blood pressure? Many people see these scenarios as subtly different, while many others see all three the same way.

If indeed the average home will someday contain at least one sophisticated robot, and that seems likely, the possibilities spiral quickly out of control. If you buy an elderly parent a robot to help them live, should the robot follow your idea of what “helping” means, or the parent’s? We can also imagine arguments in favor of mandatory robotic vigilance. It might begin with an always-on check for elderly people unable to get up after a fall, or for a kitchen gas leak — if the ability to prevent such things exists, is there an obligation to use it? This is roughly where the slippery slope arguments begin, and with good reason.

Whether it’s your car deciding to kill you instead of a gaggle of pedestrians, or a home care robot deciding to follow your doctor’s orders instead of your own, robot ethics are a chance for philosophers to get their hands dirty. Robot philosophy is, in a very real way, more dangerous than the human sort, since any decision it comes to isn’t recommended, but enforced. Most philosophers today can defend a losing point of view for decades if need be, right on till retirement — we’ll see how well that tactic works if different philosophies result in different measurable death tolls.

I (shockingly) have no answers. And neither does the Open Roboethics Initiative. The group is, however, compiling the information we’ll need to at least begin to have such discussions in an informed way. We must figure out how we feel about these issues, or at the very least how a majority of us feel about them. It’s long past time for concerted efforts like this one to take off, and make no mistake: they’re going to become very important, very quickly. Make sure you take the time to weigh in on the group’s latest poll.

Author: Graham Templeton
